The Network as a Whole

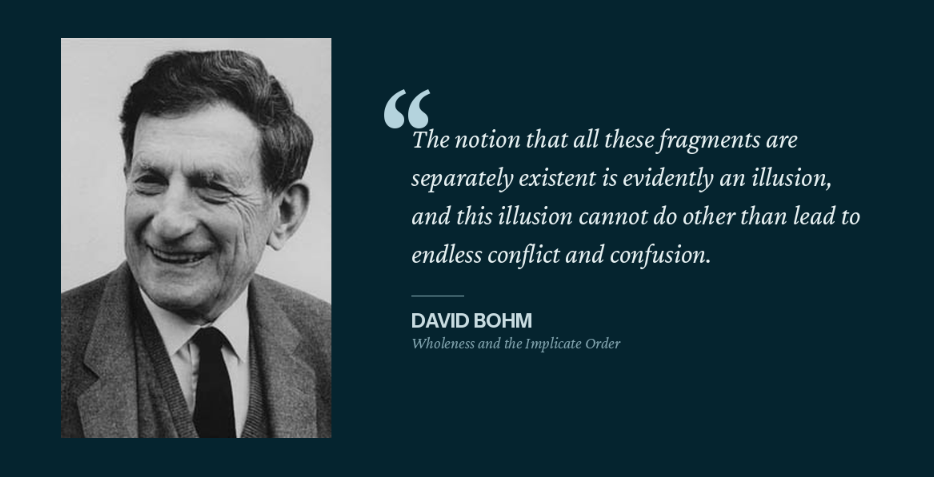

In 1980, a quantum physicist named David Bohm published a book that had absolutely nothing to do with network engineering.

Wholeness and the Implicate Order argued that reality isn't a collection of separate parts that happen to interact. It's fundamentally whole, and what we perceive as separate things are temporary forms unfolding from a deeper, enfolded order. Bohm called this deeper reality the implicate order, and the visible, measurable stuff we interact with the explicate order.

I've spent a while working with networks and I'm increasingly convinced Bohm was describing something every network engineer has felt in their gut but maybe didn't have language for: the network is a single living system and our insistence on treating it as a collection of discrete parts is the source of a lot of our pain.

The Map Is Not the Territory

We love diagrams. Clean boxes, tidy lines, color-coded VLANs, neatly separated layers. They make us feel like we understand the system. I'm not suggesting they're not useful, but the diagram is explicate order. It shows you what you can see and label.

The actual infrastructure is implicate order. It's a system where every element carries information about and responds to the whole. BGP peering policy doesn't just affect routing, it shapes traffic patterns that influence forwarding decisions that affect application performance that determines how users experience your network that drives the business outcomes your executives care about. It's all one continuous thread.

We've built entire operational models (change management, incident response, capacity planning, etc.) on the assumption that we can meaningfully isolate components, and most of the time the fiction holds up well enough. Until it doesn't.

Fundamentals as the Implicate Order

Something I wish I had understood earlier in my career: the protocols, design patterns and best practices you're learning aren't just checkboxes on a certification exam. They're the enfolded logic that determines how everything behaves as a whole.

- STP doesn't just prevent loops on a switch, it establishes the geometry of an entire layer 2 domain.

- OSPF doesn't just find the shortest path between two routers, it creates a shared model of reality that every participating device uses to make forwarding decisions.

- BGP isn't just about exchanging routes, it's a trust and policy framework that shapes how traffic flows across autonomous systems you may never directly touch.

These protocols interoperate, depend on one another and collectively form the underlying order of your network. Change one and you've changed the whole.

Design patterns work the same way. Proper layer 3 boundaries, redundancy models, security zones and traffic classification are the structural decisions that create (or destroy) coherent behavior across the system. When someone follows a solid design pattern, they're establishing an implicate order that makes the network behave predictably as a unified thing. When someone ignores those patterns (or doesn't know they exist), they're building a system whose hidden order is chaos, and said chaos has a bad habit of surfacing in the wee hours of the morning.

The "Tangibles Trap"

Early career engineers almost universally fall into what I'd call the "tangibles trap" and you know what, it's not their fault. It's how we teach this stuff.

We train people on interfaces. How to configure a VLAN. How to set up an OSPF area. How to write an access list. Each task is presented as a discrete, self-contained action. The CLI reinforces this, since you're always configuring a specific interface on a specific device. The mental model this creates is one where the network is a collection of independent boxes that happen to be connected by cables, which works fine when you're learning but becomes dangerous when you're operating.

The engineer who only understands the tangibles in a vacuum can follow runbooks and work tickets, but when something breaks in a way that doesn't match a known pattern, they're stuck. They don't understand why STP converges the way it does, so they can't predict what happens when a link fails in an unexpected place. They may not yet understand how DHCP relay, VLAN tagging, trunk negotiation and upstream routing all interact, so a "simple" change spirals into an outage they can't explain.

The engineer who understands the fundamentals (I mean really understands them, not just passed a test on them) is operating at another level, one where the system comes into view as a whole. They can predict cascading effects because they understand the hidden relationships between protocols. They know that touching OSPF cost metrics here will shift traffic there, which will affect queuing on that transit link, which will impact real-time applications for that business unit. They see the whole because they understand how the parts actually depend on one another.
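To make the OSPF cost point concrete, here is a minimal sketch of a shortest-path calculation over a link-state view of a tiny network. The topology, node names and costs are made up for illustration, and the model is simplified (symmetric costs, no areas, no equal-cost multipath), so treat it as a thinking aid rather than how any router actually implements SPF.

```python
import heapq


def shortest_path(links, src, dst):
    """Dijkstra over an undirected cost graph; returns (total_cost, path) or None."""
    graph = {}
    for (a, b), cost in links.items():
        graph.setdefault(a, []).append((b, cost))
        graph.setdefault(b, []).append((a, cost))

    queue = [(0, src, [src])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == dst:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, link_cost in graph.get(node, []):
            if neighbor not in visited:
                heapq.heappush(queue, (cost + link_cost, neighbor, path + [neighbor]))
    return None


# Hypothetical topology: one access layer, two cores, one data center.
links = {
    ("access", "core1"): 10,
    ("access", "core2"): 10,
    ("core1", "dc"): 10,
    ("core2", "dc"): 20,
}
before = shortest_path(links, "access", "dc")

# A "local" tweak: raise the cost of a single link.
links_after = dict(links)
links_after[("access", "core1")] = 30
after = shortest_path(links_after, "access", "dc")

print("before:", before)
print("after: ", after)
```

The change was made on the access-to-core1 link, but the link that feels it is core2-to-dc, which now carries traffic it never carried before. That is the cascade in miniature.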

This is why I tend to beat the drum about learning best practices and protocols at a fundamental level (i.e., read the RFCs). Understand why things work the way they do, not just how to configure them. The vendor implementation is great to know, but the protocol specification is much more important. The design patterns that emerge from deeply understanding how protocols interact are where you start seeing the whole.

When Isolation Fails

Here's a scenario that most every network engineer has lived some version of.

You make a "simple" VLAN change. Let's say you add a new VLAN to the network. Easy change, limited scope and approved by the boss. Except the new VLAN has properties that subtly shift your STP topology. Which nudges root bridge elections. Which picks a new root bridge. Which alters network paths. Which shifts traffic onto a single overloaded link. Which shows up days later as intermittent drops and users say "this Wi-Fi sucks!" when we all know it isn't the Wi-Fi at all.
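One way a scenario like this can play out, sketched under some loud assumptions: a per-VLAN spanning tree deployment, a new VLAN left at the default bridge priority on every switch, and switch names and MAC addresses that are entirely hypothetical. The only rule the sketch relies on is the standard one: the bridge with the lowest bridge ID (priority first, then MAC address) becomes root.

```python
DEFAULT_PRIORITY = 32768  # default STP bridge priority


def mac_to_int(mac):
    """Convert a colon-separated MAC address to an integer for comparison."""
    return int(mac.replace(":", ""), 16)


def elect_root(bridges):
    """Return the name of the bridge with the lowest (priority, MAC) bridge ID."""
    return min(
        bridges,
        key=lambda name: (bridges[name]["priority"], mac_to_int(bridges[name]["mac"])),
    )


# Hypothetical switches; MACs are invented for illustration.
macs = {
    "core-sw": "00:1c:aa:00:00:10",
    "dist-sw": "00:1c:aa:00:00:20",
    "old-access-sw": "00:0a:bb:00:00:01",
}

# Existing VLAN: someone deliberately tuned priorities so the core is root.
vlan10 = {
    "core-sw": {"priority": 4096, "mac": macs["core-sw"]},
    "dist-sw": {"priority": 8192, "mac": macs["dist-sw"]},
    "old-access-sw": {"priority": DEFAULT_PRIORITY, "mac": macs["old-access-sw"]},
}

# New VLAN: nobody set priorities, so every switch ties and the MAC decides.
vlan99 = {name: {"priority": DEFAULT_PRIORITY, "mac": mac} for name, mac in macs.items()}

print("VLAN 10 root:", elect_root(vlan10))
print("VLAN 99 root:", elect_root(vlan99))
```

In this sketch the new VLAN's root lands on the access switch with the lowest MAC address, not the core anyone would have picked on purpose. Nothing was misconfigured in the usual sense; the protocol simply did what it does when left to decide.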

An engineer who deeply understands network behavior might have predicted some of those downstream effects and put checks in place to verify them after the change. Not because they're psychic but because their knowledge of the fundamentals gives them access to the whole picture. They can trace the hidden connections before making the change.

Bohm argued that fragmentation isn't just an intellectual error, it's an approach that generates its own problems. When you treat a whole as if it were parts, the whole responds to your fragmented approach with correspondingly fragmented results.

The Abstraction Tax

Abstraction is how we manage complexity. Without it, we'd never get anything done. OSI layers, subnets, security zones and management planes are all useful fictions that let us reason about impossibly complex systems.

But abstractions have a cost in that they can make us blind to how things actually work together. The less you understand about the fundamentals underneath the abstraction, the more dangerous that blindness becomes.

This is a real risk of the industry's current path. We're producing engineers who can deploy overlay networks without understanding the underlay. Who can configure intent-based policies without understanding what the network actually does to reach that intent. Each layer of abstraction distances the operator from the implicate order, which is fine... as long as everything works. It's catastrophic when it doesn't because the person responsible for fixing it can't see below the surface.

The engineer who built intuition over years of troubleshooting is doing something different. When a veteran says "something feels wrong here" before any alerts come in, they're responding to patterns they've seen before or recognize through domain knowledge. That intuition is accumulated sensitivity to the system, built on years of understanding how protocols and design patterns actually behave together.

What This Means in Practice

So if networks are fundamentally whole systems, what do we actually do differently to accommodate that?

- Learn the protocols, not just the products - Vendor certifications teach you how to configure a specific implementation. That's necessary but insufficient. Understanding the protocol itself (its assumptions, its failure modes, its interactions with other protocols) is what gives you access to the implicate order of the network.

- Stop assuming isolation will protect you - Change management that focuses on minimizing blast radius is useful, but it should be paired with an acknowledgment that blast radius is a best guess. The network will decide the actual blast radius, not your SOP. Build in observation time after changes. Watch for downstream effects in places you wouldn't expect and test, test, test. Take notes and learn from anything you find after the change that you missed in the planning stages.

- Value the generalists - The engineer who understands routing, switching, wireless, security and a bit of application behavior is seeing more than the specialist who knows BGP inside and out but has never touched a wireless controller. Deep expertise matters, but breadth of perception matters more in complex systems. The industry has been trending toward specialization for decades which, IMHO, is detrimental on the whole.

- Mentor with wholeness in mind - If you're senior, stop teaching juniors what to configure and start teaching them why the network behaves the way it does. Show them the cascade effects. Walk them through how a protocol interaction in one part of the network surfaces as a user complaint somewhere else entirely. Help them see the whole early so they don't spend a decade stuck in the tangibles trap.
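The advice above about observation time after a change can be made a little more tangible. This is a rough sketch, assuming you can capture per-link utilization (as a fraction of capacity) before and after a change from whatever monitoring you already have. The link names, numbers, threshold and the `flag_unexpected_shifts` helper are all hypothetical.

```python
def flag_unexpected_shifts(before, after, expected_scope, threshold=0.15):
    """Return links outside the planned scope whose utilization moved by more than threshold."""
    flagged = {}
    for link in sorted(set(before) | set(after)):
        delta = after.get(link, 0.0) - before.get(link, 0.0)
        if link not in expected_scope and abs(delta) > threshold:
            flagged[link] = round(delta, 2)
    return flagged


# Hypothetical utilization snapshots (fraction of link capacity).
before = {"core1-dc": 0.40, "core2-dc": 0.35, "access3-core1": 0.20}
after = {"core1-dc": 0.15, "core2-dc": 0.80, "access3-core1": 0.22}

# The change ticket said only this link was in scope.
surprises = flag_unexpected_shifts(before, after, expected_scope={"access3-core1"})

for link, delta in surprises.items():
    print(f"{link}: utilization changed by {delta:+.2f}")
```

The point of the `expected_scope` argument is to put your blast radius guess in writing, so the network gets a chance to disagree with it in a place you will actually look.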

Growing, Not Building

Networks aren't assembled from parts, they're grown from interdependent patterns.

This line of thinking changes how you approach design, troubleshooting, automation and even hiring. If you view your infrastructure as a machine made of parts, you'll try to fix it by replacing parts. If you view it as a living system with an underlying order, you'll try to understand why it's behaving the way it is before you start swapping components.

If you're early in your career, take this as encouragement: the time you invest in understanding fundamentals is not wasted time. It's not busy work that you'll outgrow once you move into management or architecture. It's the foundation that lets you perceive the network as whole rather than fragmented. Every RFC you read, every lab you build, every protocol interaction you trace end to end is building your capacity to operate at the level of the network as a whole. That investment compounds for your entire career.

Bohm spent his career trying to convince physicists that the universe isn't a collection of particles bumping into each other but an undivided, flowing whole. He mostly failed during his lifetime because the physics establishment preferred its fragments, but his ideas kept finding traction in unexpected places: biology, consciousness research and organizational theory. Might be a stretch, but perhaps the tenets apply to network engineering as well.